At GDS, we work in the open. Whenever we can, we share our work: ideas, decisions, designs, source code, and our successes and failures. We do so for the sake of transparency and to give back to the web design community we have borrowed so much from.

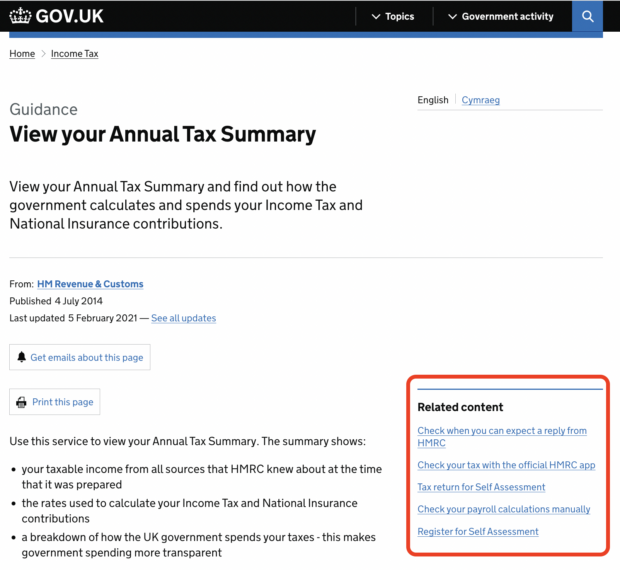

One of the tools we designed in the open is the related content recommender. Many pages on GOV.UK include a “Related content” box suggesting other pages the user may be interested in. A page’s editor can manually specify which content is suggested, but by default these links are generated automatically by our related content recommender, which is based on the node2vec algorithm.

On average, about 2% to 3% of visits to a GOV.UK page include a click on a recommended content link. With almost 4 million visits per day on average (May 2022), this makes it quite a popular tool on the site. These figures only cover users who consent to analytics cookies, as GOV.UK does not capture anyone else.
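To put those figures in perspective, here is a rough back-of-envelope calculation. It is only a sketch: it assumes the 2% to 3% rate applies uniformly to all of the almost 4 million daily visits, which the figures above do not state, and since the visit count is slightly under 4 million the results are upper bounds.

```python
# Back-of-envelope estimate of daily clicks on "Related content" links.
# Assumption (not stated in the post): the 2%-3% rate applies to all
# ~4 million daily visits recorded in May 2022 (consenting users only).
DAILY_VISITS = 4_000_000
CLICK_RATES = (0.02, 0.03)


def estimated_daily_clicks(visits: int, rate: float) -> int:
    """Return the estimated number of visits that click a related link."""
    return round(visits * rate)


low, high = (estimated_daily_clicks(DAILY_VISITS, r) for r in CLICK_RATES)
print(f"Roughly {low:,} to {high:,} clicks per day")
```

Under those assumptions, the sketch prints `Roughly 80,000 to 120,000 clicks per day`.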

But what if a user wanted to know how content was recommended to them on a page? They could read the code on GitHub and our blog posts on related links, but some technical details, such as the data we use or the assumptions we make to train the model, are either missing or not accessible to a non-technical reader.

‘Algorithmic transparency’ means being open and clear about how algorithms, and any product or application underpinned by them, support decisions. This transparency is essential for building people’s trust.

Participating in the algorithmic transparency pilot

Our team was keen to put a newly developed algorithmic transparency template to the test, as part of a pilot organised by the Central Digital and Data Office (CDDO) and the Centre for Data Ethics and Innovation (CDEI).

The template forms part of the Algorithmic Transparency Standard, itself part of the government’s National Data Strategy and National AI Strategy. It aims to improve transparency by standardising the way information is collected about how the public sector uses algorithmic tools.

The template is a structured series of questions that we answered in order to document:

- what our algorithmic tool does and why it is used (in non-technical terms)

- how our tool works technically, and what alternatives we considered

- what data it uses

- any considerations around equality impact, risk monitoring and ethical assessment

The CDDO and CDEI team then collected our answers and mapped them against the algorithmic transparency data standard. The result was subsequently published on GOV.UK as our transparency pilot report.

Completing the template was a useful exercise, not only because the resulting report may benefit the public, but also for us, the developers and maintainers of the algorithmic tool. It helped us reflect on our approach and clarify our assumptions. It also helped us spell out the decision-making process the tool follows, and justify its use.

This will be invaluable knowledge for future colleagues and for future iterations of the tool.

What’s next?

Since the recommender was introduced in 2019, we’ve iterated on it several times to adapt to changes in GOV.UK content and to improve the relevance of the related content it suggests.

For instance, the ever-increasing amount of content started to make it crash by exhausting the memory available on the machine running it, so we had to improve its performance to make it run on a reasonably sized machine. We also worked on making better recommendations by testing variations on the original algorithm.

We look forward to keeping our algorithmic transparency report up to date as we make further improvements and changes. We want to demonstrate that we value transparency and openness, and to set a precedent for how this can work with algorithms.

We encourage all teams who develop or use algorithms in the public sector to complete the algorithmic transparency template. We strongly recommend it.

Read our transparency pilot report for related content.

Subscribe to Inside GOV.UK to find out more about our work.

The GOV.UK app went live in public beta in July 2025. Find out what’s been happening, and what’s coming next,

2 comments

Comment by Rajitha R posted on

Really interesting to hear about this work!

If we find any particularly strange recommendations on pages, would you like to hear about them? My team doesn't have access to change the related content links, and we've come across some automatically added ones on pages we manage that aren't related.

Comment by Max Froumentin posted on

Hi Rajitha,

Thanks for getting in touch. Managing editors from departments can get in touch with GDS content designers to amend related links or replace them. You can do that by sending a request through GOV.UK Sign On.

Thanks, Max