In the Core team (one of 4 product teams at GOV.UK) we look after the development and maintenance of many applications behind GOV.UK, including Whitehall Publisher.

We collect lots of data about the usage of GOV.UK and how it performs technically. It helps us with tasks such as assessing development requests.

We’ve identified a need to be more structured in our use of this data, so we've created a simple analytics report. It shows activity in the apps, focusing on how they are used by government publishers.

This is the first time we've collected and interpreted data in such a consistent way for GOV.UK’s publishing tools.

What are publishers doing?

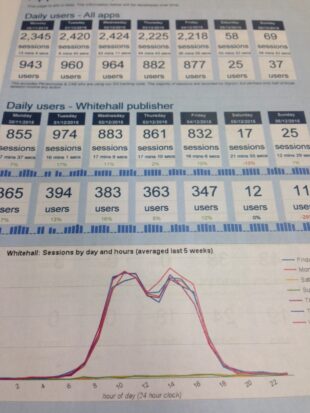

Our first report was really simple and showed who was using the different apps. We produce this weekly so we can start to understand things like:

- how many people use each app every day

- what the busiest day of the week is

- what the busiest time of day is for publishing activity

Over the last six months, the busiest time in Whitehall Publisher, by number of users, was between 11am and midday on Thursdays.
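As a rough illustration of how a "busiest day and time" figure like this could be worked out, here is a minimal sketch. It assumes the usage data can be exported as pairs of user ID and timestamp, and it counts distinct users in each weekday-and-hour slot. The function name, the data layout and the sample events are all hypothetical and are not taken from the actual report.

```python
from collections import defaultdict
from datetime import datetime

DAY_NAMES = ["Monday", "Tuesday", "Wednesday", "Thursday",
             "Friday", "Saturday", "Sunday"]


def busiest_slot(events):
    """Return ((weekday_name, hour), distinct_user_count) for the busiest slot.

    `events` is an iterable of (user_id, iso_timestamp) pairs.
    This layout is an assumption made for the sketch.
    """
    users_by_slot = defaultdict(set)
    for user_id, timestamp in events:
        when = datetime.fromisoformat(timestamp)
        slot = (DAY_NAMES[when.weekday()], when.hour)
        # A set means each user is counted once per slot, however active they are.
        users_by_slot[slot].add(user_id)
    slot, users = max(users_by_slot.items(), key=lambda item: len(item[1]))
    return slot, len(users)


# Made-up sample events, for illustration only.
sample_events = [
    ("alice", "2014-03-06T11:05:00"),  # a Thursday
    ("bob", "2014-03-06T11:40:00"),
    ("alice", "2014-03-06T11:50:00"),
    ("carol", "2014-03-05T15:10:00"),  # a Wednesday
]

print(busiest_slot(sample_events))
```

Counting distinct users rather than raw events is one possible reading of "by number of users". Averaging the weekly counts for each slot would be another reasonable choice.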

This first report helps us understand how the apps are used, and plots activity against longer-term trends. Some changes will be seasonal, but this information can also help us do things like deploy code at low-impact times, or anticipate an increase in support tickets if we spot an increase in new users.

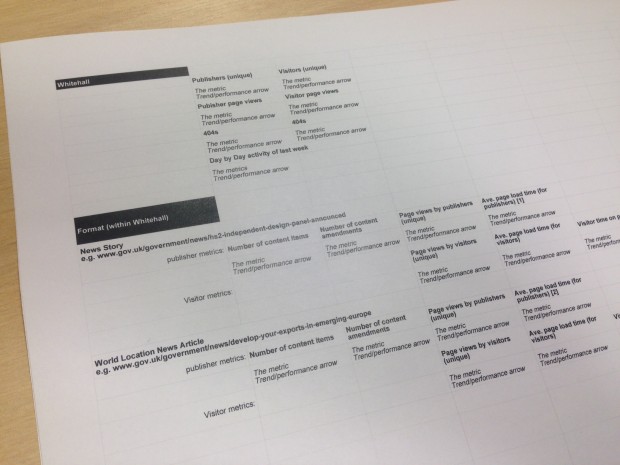

Creating a team dashboard

The next iteration of the report, which we're working on now, will continue to report on the usage of Whitehall Publisher by publishers, but it will also provide information on activity by the public and performance of the apps. That will include things like the technical speed of delivery (how fast a page loads) and 404 error pages (how many, and of what type).

The aim of collecting this additional information is so that we can:

- identify problems before they are reported by users

- validate or challenge requests for development or change

- inform future work

Brad Wright has already written about our focus on improving the architecture of GOV.UK, and this report will also be useful for showing whether the architectural migration is improving performance. For example, are we seeing an improvement in page load speeds, meaning publishers and the public are waiting less time for information?

We have already started to make observations from data that we want to collect in the next dashboard. For example, on average, there are 74 page views of Whitehall content by the public for every page view in Whitehall by a publisher.
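A ratio like this is simple arithmetic: public page views divided by publisher page views over the same period. The sketch below shows the calculation with made-up totals chosen only to reproduce a ratio of 74. The function name and the figures are hypothetical and are not the real counts.

```python
def public_views_per_publisher_view(public_views, publisher_views):
    """Return how many public page views there are per publisher page view."""
    if publisher_views <= 0:
        raise ValueError("publisher_views must be greater than zero")
    return public_views / publisher_views


# Illustrative totals only; the real figures are not given in this post.
ratio = public_views_per_publisher_view(public_views=7400, publisher_views=100)
print(ratio)
```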

Setting a benchmark

Once we’ve collected data for a few months, the next stage will be agreeing team metrics that we can measure our performance against.

Once we understand what the regular activity of our products is, we can measure the success of the work we do and track changes in user behaviour too. This is important for team motivation because we can see how usage changes depending on what we do. It also keeps us focussed on the team priorities.

Focus

We're keen to produce a report that only contains information that can affect the work we do within the Core team - we don’t want to get bogged down in data that doesn’t help us.

The report should provide just the right amount of information so that if there are issues or points of interest we can delve into the data. The team dashboard will be something that is quick and easy for us to look at every week.

We'll keep sharing what we learn from this work in future blog posts.

Jennifer Allum is the GOV.UK Core Formats Product Manager. Keep in touch: follow Jennifer on Twitter and subscribe to email alerts from this blog.

2 comments

Comment by E. Brown posted on

Hi Jennifer,

Thanks for this post. It's a part of the service I don't know much about.

In this context: what is an 'app'? b'c I think of apps as icons that appear on my phone. I'm guessing you mean something different.

You mention products too:

"Once we understand what the regular activity of ours products is, we can measure the success of the work we do, and allow us to track changes in user behaviour too."

Is an app the same as a product?

RSVP, cheers,

E. Brown

Comment by Jennifer Allum posted on

Hi Elizabeth,

Thanks for pointing out the need to be clearer on the definition of applications and products.

In this context, by app I mean a piece of software. That application may also, in technical terms, be the product. In the case of GOV.UK though, our product consists of multiple applications, which we use in combination (like components) to manage parts of GOV.UK.

Thanks,

Jennifer